It’s time for another rant. As you might be well aware, I’ve been a big fan of the Korean video editing app KineMaster (available for Android and iOS/iPadOS) which was the first video editor on Android that could actually be described as fairly “advanced” – at least when judging it by mobile app standards. It launched in December 2013 and I still remember praising it in a presentation at the original MoJoCon event in Dublin in 2015. The UI was absolutely brilliant for touch screen use, it had a rich set of features to work with and it was also widely available for basically all Android devices. But ever since the original lead engineer and some team members left the company in 2017, development of the app has become very sluggish and mostly disappointing. Even darker clouds in terms of user experience have been accumulating over the last months…

READ MORE

Remember the 3.5mm headphone jack? You know, the port on your phone where you put the cable of your headphones in before Bluetooth headphones became all the rage? Given all the differences between Android phones and iPhones, both in terms of hardware and software, this was, for quite a while, a somewhat unifying factor. For the mobile content creation community this meant that you could use certain external mics (like the original iRig Mic) with both kinds of phones. Then Apple and in its wake many/most other smartphone makers decided to get rid of the headphone jack and rely on a sole physical port for accessory hardware connections: the Lightning port (Apple) or a USB-C port (Android phones). While we’re still waiting for the iPhone to finally give up its proprietary Lightning port and switch to the universal USB-C, I found a little something on the software side that works the same on both mobile platforms. It’s something lots of people might not even be aware of and those who are may not know what it’s actually about. But it’s useful and interesting.

READ MORE

The first smartphone I ever owned was a Samsung S3 Mini. When I purchased it in 2013 I didn’t really think about the phone’s potential for video production. I just wanted to finally step into the world of touch screen phones with mobile internet, without paying a premium price for an iPhone. It was only after spending some time with the lil’ Samsung that I became more and more interested in seeing the device’s potential for more than just taking calls, browsing the web and snapping some pics. My next phone was, interestingly enough, a Nokia 920 running the Windows Phone operating system. I was very much aware of the sparse app situation on Microsoft’s platform but intrigued by Nokia’s camera hardware (Zeiss lens) and the support for 25fps in the native camera app. Since the Windows Phone app store didn’t really improve much and it became obvious very soon that the platform was not going to stick around much longer, I kept looking for an Android phone brand that would strike a chord with me.

Continue reading

While Australian company Blackmagic Design (BMD) might best be known for its affordable cinema camera line-up (it all started with the Blackmagic Cinema Camera and Blackmagic Pocket Cinema Camera in 2012/13), they have also established a reputation in the realm of video post production. Their color grading software DaVinci Resolve (available for both Windows and macOS) can be considered a veritable industry standard used by professionals all over the world.

READ MORE

Preface

Last year, for the first time, I hosted an article on this blog that wasn’t written by myself but by BBC Academy mobile journalism (“MoJo”) trainer Marc Blank-Settle, whom I have met on several occasions and keep constantly in touch with via Twitter. His yearly insights into every new iteration of Apple’s mobile operating system iOS from a journalist’s point of view have become a much respected staple of the community so it’s no surprise he’s done it again for iOS 16. If you are an iPhone user, you should definitely dig into this and don’t forget to follow Marc on Twitter for the latest updates or to ask him a question. I’m also using this opportunity to apologize for my own relative silence on this blog in the last months but life’s been extremely busy. Hopefully the near future will allow me again to post more content here. But for today, I’m handing things over to Marc Blank-Settle. – Florian from smartfilming.blog

READ MORE

Android has no lack of capable mobile video editing solutions (as can be seen in this earlier article) but there is one app that’s still missing when looking over at the iOS side of things: LumaFusion. All in all, it’s the most advanced video editor across mobile platforms and with its feature set (almost) matching viable desktop NLEs, it’s been a favorite among professionals and enthusiasts – it can even be used with M1 Macs as desktop software now. So many Android users have been anxiously asking the question: When will LumaFusion make it to Android?

Read on

The good thing about numbering your blog posts is that it’s easy to figure out when you have an anniversary coming up… 😉 And now’s the time! To be honest, I wasn’t really sure I would get that far when I started smartfilming.blog in the summer of 2015 with my first articles in German. I had made my initial steps in the blogosphere in 2009 writing about something completely different but discontinued the project two years later when I realized my interest in the topic was fading. Well, I have shown more stamina this time around: 6 years and 50 blog posts! (in case you want to check out an overview of the previous 49 click here) So what to do for this happy occasion?

Read On

Preface

So far, all the blog posts on smartfilming.blog were written by myself. I’m happy that for the very first time I’m now hosting a guest post here. The article is by Marc Blank-Settle who works for the BBC Academy as a smartphone trainer and is highly regarded as one of the top sources for everything “MoJo” (mobile journalism), particularly when it comes to iPhones and iOS. His yearly round-up of all the new features introduced with the latest version of Apple’s mobile operating system iOS has become a go-to for journalists and content creators. iOS 15 just came out, so without further ado, I’ll leave you to Marc’s take on the new software for iPhones and don’t forget to follow him on Twitter! – Florian – smartfilming.blog

Read on

One of the things that always surprised me about Apple’s mobile operating system iOS (and now also iPadOS) was the fact that it wasn’t able to work with Apple’s very own professional video codec ProRes. ProRes is a high-quality video codec that gives a lot of flexibility for grading in post and is easy on the hardware while editing. Years ago I purchased the original Blackmagic Design Pocket Cinema Camera which can record in ProRes and I was really looking forward to having a very compact mobile video production combo with the BMPCC (that, unlike the later BMPCC 4K/6K, was actually pocketable) and an iPad running LumaFusion for editing. But no, iOS/iPadOS didn’t support ProRes on a system level so LumaFusion couldn’t either. What a bummer.

Read On

Ok, today I have something a little different from the usual blog fare around here: a quick and dirty rant, maybe just a little bit tongue-in-cheek. I beg your pardon. I will only shame the deed, not name any perpetrators. You will probably have come across it and either noticed it consciously or subconsciously. Most likely on YouTube. There’s also a good chance you might disagree with what I am about to say. So be it. Now what am I talking about?

Read On

It’s the dog days of summer again – well at least if you live in the northern hemisphere or near the equator. While many people will be happy to finally escape the long lockdown winter and are looking forward to meeting friends and family outside, intense sunlight and heat can also put extra stress on the body – and it makes for some obvious and less obvious challenges when doing videography. Here are some tips/ideas to tackle those challenges.

Read on

Ask anyone about the weaknesses of smartphone cameras and you will surely find that people often point towards a phone’s low-light capabilities as the or at least one of its Achilles heel(s). When you are outside during the day it’s relatively easy to shoot some good-looking footage with your mobile device, even with budget phones. Once it’s darker or you’re indoors, things get more difficult. The reason for this is essentially that the image sensors in smartphones are still pretty small compared to those in DSLMs/DSLRs or professional video/cinema cameras. Bigger sensors can collect more photons (light) and produce better low light images. A so-called “Full Frame” sensor in a DSLM like Sony’s Alpha 7-series has a surface area of 864 mm², a common 1/2.5” smartphone image sensor has only about 25 mm². So why not just put a huge sensor in a smartphone?
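To put the two sensor sizes above in perspective, here is a quick back-of-the-envelope calculation in Python. The 36 x 24 mm full frame dimensions are standard; the 5.76 x 4.29 mm figure for a 1/2.5” sensor is a commonly quoted nominal value and should be treated as an assumption, since actual dimensions vary by manufacturer. The conversion to “stops” is a simplification that only looks at total light gathered at identical exposure settings.

```python
import math

# Approximate sensor dimensions in millimetres (width, height).
# The 1/2.5" values are nominal and assumed for illustration.
SENSORS = {
    "full_frame": (36.0, 24.0),
    "one_over_2_5_inch": (5.76, 4.29),
}


def area_mm2(width_mm, height_mm):
    """Surface area of a rectangular sensor in square millimetres."""
    return width_mm * height_mm


full_frame_area = area_mm2(*SENSORS["full_frame"])
phone_area = area_mm2(*SENSORS["one_over_2_5_inch"])

# How many times larger the full frame surface is.
ratio = full_frame_area / phone_area

# Each doubling of collected light corresponds to one stop.
stops = math.log2(ratio)

print(f"Full frame: {full_frame_area:.0f} mm^2")
print(f"1/2.5 inch: {phone_area:.1f} mm^2")
print(f"Area ratio: {ratio:.1f}x, roughly {stops:.1f} stops")
```

In other words, the full frame sensor has roughly 35 times the surface area, which is in the region of five stops of light under these simplified assumptions.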

Read On

Rode just recently released the Wireless GO II, a very compact wireless audio system I wrote about in my last article. One of its cool features is that you can feed two transmitters into one receiver so you don’t need two audio inputs on your camera or smartphone to work with two external mic sources simultaneously. What’s even cooler is that you can record the two mics into separate channels of a video file with split track dual mono audio so you are able to access and mix them individually later on which can be very helpful if you need to make some volume adjustments or eliminate unwanted noise from one mic that would otherwise just be “baked in” with a merged track. There’s also the option to record a -12dB safety track into the second channel when you are using the GO II’s “merged mode” instead of the “split mode” – this can be a lifesaver when the audio of the original track clips because of loud input.
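To illustrate why a -12dB safety track can save a recording, here is a small Python sketch. It uses the standard decibel-to-amplitude conversion; the peak value of 1.8 and the helper names are made up for the example and have nothing to do with how the GO II works internally.

```python
def db_to_gain(db):
    """Convert a level change in decibels to a linear amplitude factor."""
    return 10 ** (db / 20)


def clips(peak, full_scale=1.0):
    """True if a peak exceeds digital full scale (normalized to 1.0)."""
    return abs(peak) > full_scale


SAFETY_OFFSET_DB = -12.0
safety_gain = db_to_gain(SAFETY_OFFSET_DB)  # roughly a quarter of the amplitude

# Hypothetical loud moment that overshoots full scale on the main track.
loud_peak = 1.8

main_clips = clips(loud_peak)
safety_peak = loud_peak * safety_gain
safety_clips = clips(safety_peak)

print(f"Safety gain: {safety_gain:.3f}")
print(f"Main track clips: {main_clips}")
print(f"Safety track peak: {safety_peak:.3f}, clips: {safety_clips}")
```

The same input that distorts on the main channel stays well below full scale on the safety channel, so you can swap in that section during editing.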

Read On

Australian microphone maker RØDE is an interesting company. For a long time, the main thing they had going for them was that they would provide an almost-as-good but relatively low-cost alternative to high-end brands like Sennheiser or AKG and their established microphones, thereby “democratizing” decent audio gear for the masses. Over the last years however, Rode grew from “mimicking” products of other companies to a highly innovative force, creating original products which others now mimicked in return. Rode was first to come out with a dedicated quality smartphone lavalier microphone (smartLav+) for instance and in 2019, the Wireless GO established another new microphone category: the ultra-compact wireless system with an inbuilt mic on the TX unit. It worked right out of the box with DSLMs/DSLRs, via a TRS-to-TRRS or USB-C cable with smartphones and via a 3.5mm-to-XLR adapter with pro camcorders. The Wireless GO became an instant runaway success and there’s much to love about it – seemingly small details like the clamp that doubles as a cold shoe mount are plain ingenuity. The Interview GO accessory even turns it into a super light-weight handheld reporter mic and you are also able to use it like a more traditional wireless system with a lavalier mic that plugs into the 3.5mm jack of the transmitter. But it wasn’t perfect (how could it be as a first generation product?). The flimsy attachable wind-screen became sort of a running joke among GO users (I had my fair share of trouble with it) and many envied the ability of the similar Saramonic Blink 500 series (B2, B4, B6) to have two transmitters go into a single receiver – albeit without the ability for split channels. Personally, I also had occasional problems with interference when using it with an XLR adapter on bigger cameras and a Zoom H5 audio recorder.

Now Rode has launched a successor, the Wireless GO II. Is it the perfect compact wireless system this time around?

Read On

I’ve already written about Camera2 API in two previous blog posts (#6 & #10) but a couple of years have passed since and I felt like taking another look at the topic now that we’re in 2021.

Just in case you don’t have a clue what I’m talking about here: Camera2 API is a software component of Google’s mobile operating system Android (which basically runs on every smartphone today except Apple’s iPhones) that enables 3rd party camera apps (camera apps other than the one that’s already on your phone) to access more advanced functionality/controls of the camera, for instance the setting of a precise shutter speed value for correct exposure. Android phone makers need to implement Camera2 API into their version of Android and not all do it fully. There are four different implementation levels: “Legacy”, “Limited”, “Full” and “Level 3”. “Legacy” basically means Camera2 API hasn’t been implemented at all and the phone uses the old, way more primitive Android Camera API, “Limited” signifies that some components of the Camera2 API have been implemented but not all, “Full” and “Level 3” indicate complete implementation in terms of video-related functionality. “Level 3” only has the additional benefit for photography that you can shoot in RAW format. Android 3rd party camera apps like Filmic Pro, Protake, mcpro24fps, ProShot, Footej Camera 2 or Open Camera can only unleash their full potential if the phone has adequate Camera2 API support. Filmic Pro doesn’t even let you install the app in the first place if the phone doesn’t have proper implementation. “Adequate”/“proper” can already be “Limited” for certain phones but you can only be sure with “Full” and “Level 3” devices. With some other apps like Open Camera, Camera2 API is deactivated by default and you need to go into the settings to enable it to access things like shutter speed and ISO control.
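For the more technically inclined, the four levels described above can be sketched in a few lines of Python. On the device, the level is reported as an integer through `CameraCharacteristics.INFO_SUPPORTED_HARDWARE_LEVEL`; the numeric values below are the ones from the Android SDK, and note that they are not sorted by capability. The function names and the “Full” default threshold are my own choices for illustration, not part of any app or of Android itself.

```python
# Integer values of the INFO_SUPPORTED_HARDWARE_LEVEL_* constants in the Android SDK.
# Note that the numbers do not increase with capability.
HARDWARE_LEVELS = {
    0: "Limited",
    1: "Full",
    2: "Legacy",
    3: "Level 3",
}

# Capability order from least to most complete implementation.
CAPABILITY_ORDER = ["Legacy", "Limited", "Full", "Level 3"]


def level_name(value):
    """Translate the reported integer into a readable level name."""
    return HARDWARE_LEVELS.get(value, "Unknown")


def meets(value, required="Full"):
    """Check whether a reported level is at least the required level."""
    name = level_name(value)
    if name not in CAPABILITY_ORDER:
        return False
    return CAPABILITY_ORDER.index(name) >= CAPABILITY_ORDER.index(required)


for reported in (2, 0, 1, 3):
    print(f"{level_name(reported):8s} at least Full: {meets(reported)}")
```

Comparing the raw integers directly would rank “Legacy” (2) above “Full” (1), which is why the sketch maps them to an explicit capability order first.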

Read On

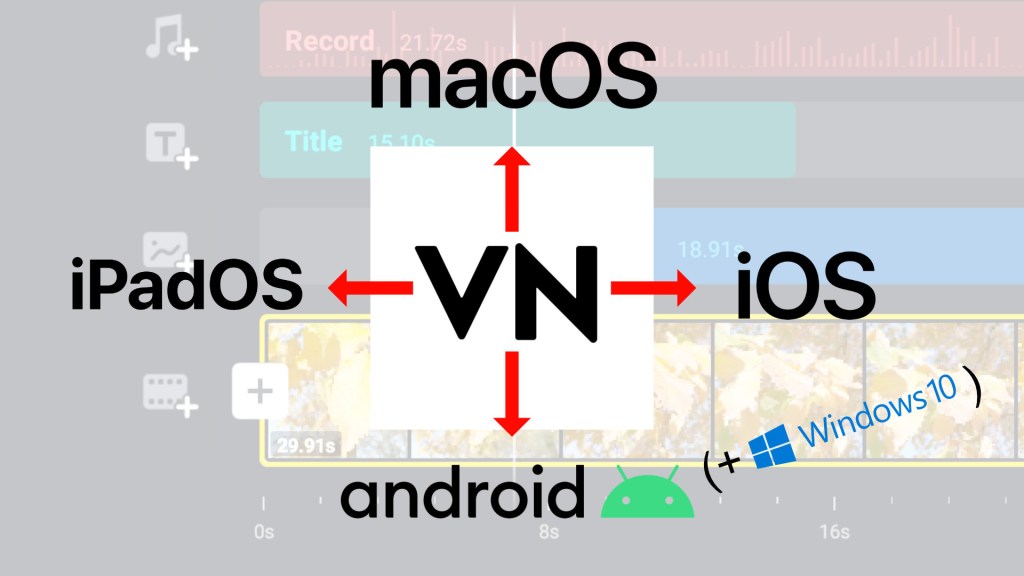

As I have pointed out in two of my previous blog posts (What’s the best free cross-platform mobile video editing app?, Best video editors / video editing apps for Android in 2021) VN is a free and very capable mobile video editor for Android and iPhone/iPad and the makers recently also launched a desktop version for macOS. Project file sharing takes advantage of that and makes it possible to start your editing work on one device and finish it on another. So for instance after having shot some footage on your iPhone, you can start editing right away using VN for iPhone but transfer the whole project to your iMac or MacBook Pro later to have a bigger screen and mouse control. It’s also a great way to free up storage space on your phone since you can archive projects in the cloud, on an external drive or computer and delete them from your mobile device afterwards. Project sharing isn’t a one-way trick, it also works the other way around: You start a project using VN on your iMac or MacBook Pro and then transfer it to your iPhone or iPad because you have to go somewhere and want to continue your project while commuting. And it’s not all about Apple products either, you can also share from or to VN on Android smartphones and tablets (so basically every smartphone or tablet that’s not made by Apple). What about Windows? Yes, this is also possible but you will need to install an Android emulator on your PC and I will not go into the details about the procedure in this article as I don’t own a PC to test. But you can check out a good tutorial on the VN site here.

Read On

Let’s be honest: Despite the fact that phone screens have become increasingly bigger over the last years, they are still rather small for doing some serious video editing on the go. No doubt, you CAN do video editing on your phone and achieve great results, particularly if you are using an app with a touch-friendly UI like KineMaster that was brilliantly designed for phone screens. But I’m confident just about every mobile veditor would appreciate some more screen real estate. Sure, you can use a tablet for editing but tablets aren’t great devices for shooting and if you want to do everything on one device pretty much everyone would choose a phone, right?

Read On

One of the big reasons why Android has such an overwhelming dominance as a mobile operating system on a global scale (around 75% of smartphones worldwide run Android) is that you basically have a seamless price range from the very bottom to the very top – no matter your budget, there’s an Android phone that will fit it. This is generally a very good thing since it allows everyone on this planet to participate in mobile communication, not just those with deep pockets. But as many of us would agree, smartphones are not pure communication devices anymore, you can also use them to actively create content. In this respect, Android phones are bringing the power of storytelling to the people and could therefore be regarded as an invaluable asset in democratizing this mighty tool. But if you CAN get a (very) cheap Android phone, SHOULD you get one?

Read On

There are times when – for reasons of privacy or even a person’s physical safety – you want to make certain parts of a frame in a video unrecognizable so as not to give away someone’s identity or the place where you shot the video. While it’s fairly easy to achieve something like that for a photograph, it’s a lot more challenging for video for two reasons: 1) You might have a person moving around within a shot or a moving camera which constantly alters the location of the subject within the frame. 2) If the person talks, he or she might also be identifiable just by his/her voice. So are there any apps that help you to anonymize persons or objects in videos when working on a smartphone?

Read On

Ever since I started this blog, I wanted to write an article about my favorite video editing apps on Android but I could never decide on how to go about it, whether to write a separate in-depth article on each of them, a really long one on all of them or a more condensed one without too much detail or workflow explanations, more of an overview. So I recently figured there’s been enough pondering on this subject and I should just start writing something. The very basic common ground for all these mobile video editing apps mentioned here is that they allow you to combine multiple video clips into a timeline and arrange them in a desired order. Some might question the validity of editing video on such a relatively small screen as that of a smartphone (even though screen sizes have increased drastically over the last years). While it’s true that there definitely are limitations and I probably wouldn’t consider editing a feature-length movie that way, there’s also an undeniable fascination about the fact that it’s actually doable and can also be a lot of fun. I would even dare to say that it’s a charming throwback to the days before digital non-linear editing when the process of cutting and splicing actual film strips had a very tactile nature to it. But let’s get started…

Read On
